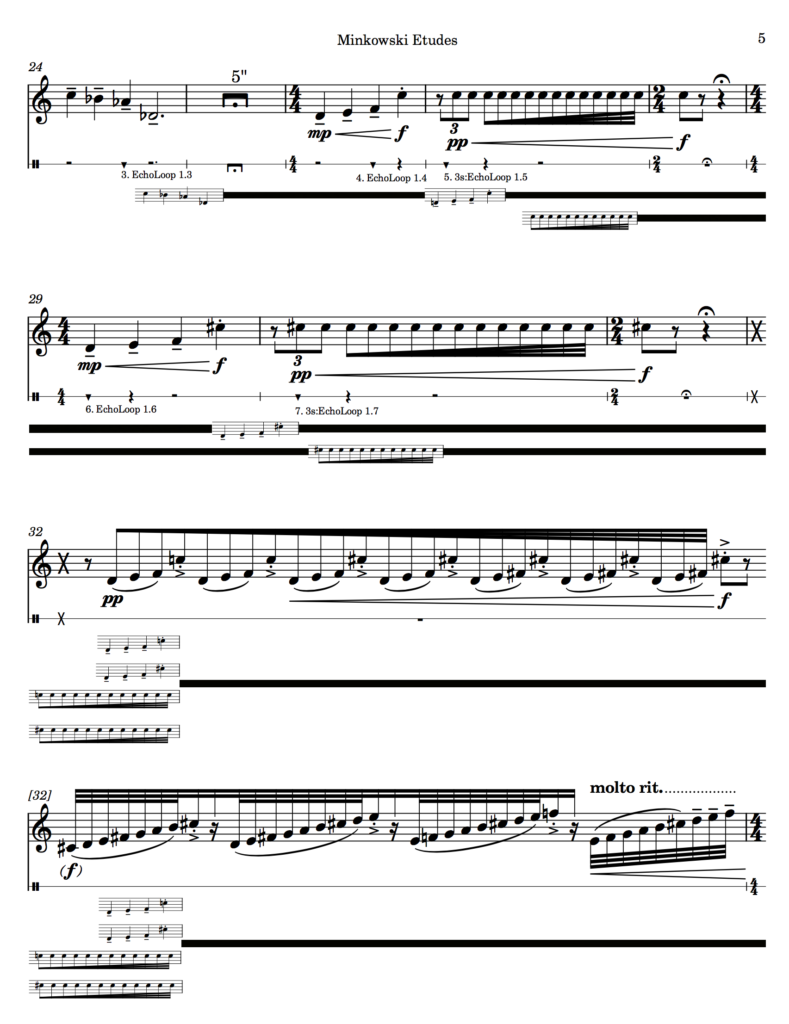

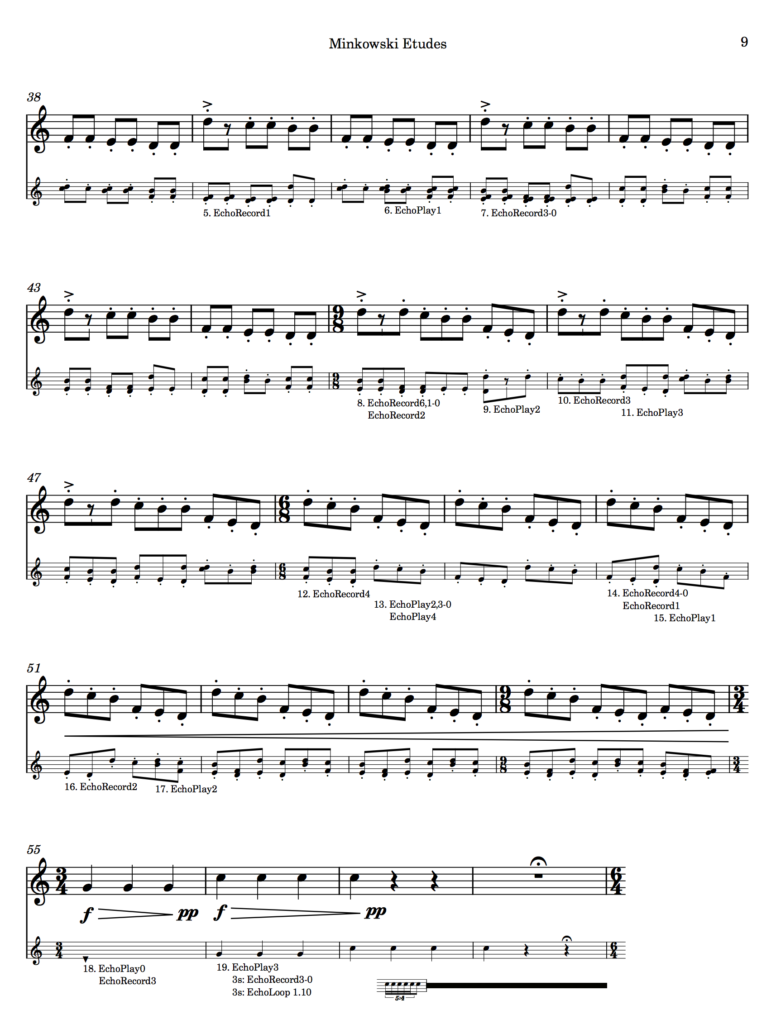

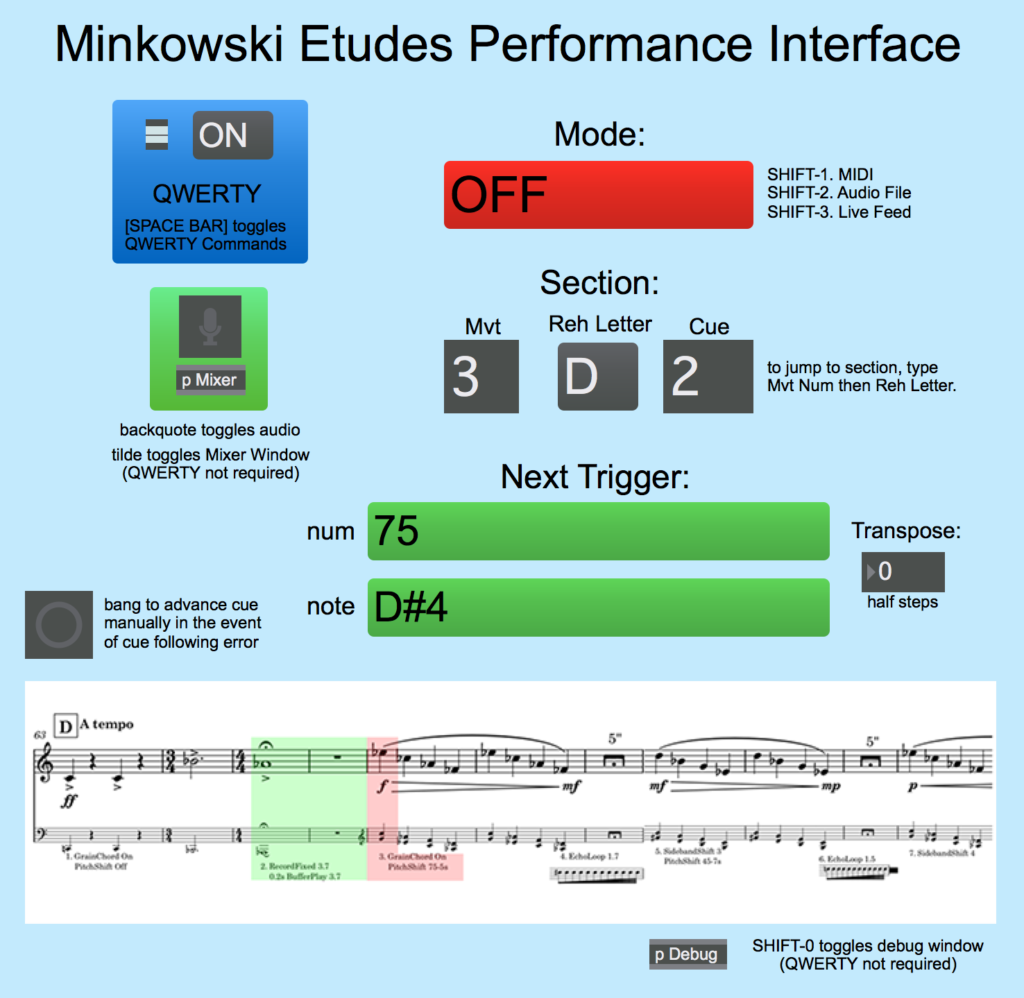

About a year ago, I decided to write Minkowski Etudes as a work for solo trumpet and interactive electronics. Last week my performer Dylan premiered it in its entirety at his senior recital, and he also played it as part of the Southern Sonic Festival. The Max programming needs some final tweaking and I may want to redo my cue structure using Antescofo (I have to decide if I want to pay the annual Ircam fee), but given that the bulk of the creative, notational, and programming work is now complete, I thought I’d write a quick retrospective about it.

First off, I found it fascinating to hear different people’s reactions to the piece during its premiere performances, particularly which parts they liked the most. The favorites were spread pretty evenly across all three movements, and all for different reasons. I think that makes the piece a success, because different parts can appeal to a wide variety of people.

At this point, I personally find that I dislike the second movement the most. Part of that comes from some of the technical difficulties in error-free execution – the primary reason I want to potentially use Antescofo in the first place – but another part is that the metronomic nature of the movement makes it structurally the most rigid and inflexible, limiting the performer’s ability to add their own personal musical expression and leaving little margin for mistakes in execution. In the first and last movements, the electronics are a vehicle that puts the performer at the forefront, whereas in the second movement, the performer ends up being a vehicle that puts the electronics at the forefront. That feels counter to why the piece is in an interactive form in the first place – if I wanted it to be like that, I would have just created a tape accompaniment for the performer to play to. I’m not sure what to do about that given the nature of the material, but it’s something I’ll be weighing before I use that mechanic in future works.

The Max programming took a significant chunk of time, although I would say that the time invested was well worth it – I learned a lot about MSP, a side of Max that I had never really used before, and all of the patches I created for this project can be reused and adapted for future works instead of my having to start from scratch. Even so, it’s worth remembering that creating a work with interactive electronics, with the kind of attention to detail that I require as a fairly detail-oriented musician and programmer, at least doubles the amount of time and energy that I would put into any other kind of composition.

That might seem like something that would discourage me, but it actually does quite the opposite. The work I did on this project and the passion I had and still have for its final outcome have helped me realize that I have a lot of unique things to say in the interactive electronic medium, and that this could have a lot of legs for my compositional career. I’m hoping that after tweaking the Max programming to make it as error-free as possible, I can get this performed in Oregon and Pennsylvania at my alma mater universities, but I also have ambitions to publish this work and potentially have it played by other trumpet performers. If that happens, it could encourage me to devote more energy to the interactive electronic space, as well as open up future opportunities and commissions from those who might come across this work and find it valuable.

Where I go from here immediately will take shape in the next few years. I’m close to finalizing a commission to write a wind ensemble piece for the spring of 2020 here at Tulane, which will be the first time I’ll have written for a large concert ensemble since beauty…beholder back in 2012. I’m also close to finalizing a commission to write a percussion duet for the 2018-2019 school year. That will likely be purely acoustic, as I already have a few conceptual ideas that fit best in the purely acoustic space.

After that, I have the framework for a piece that I was originally going to make as a standalone digital audio piece and that I’m now inclined to turn into a work for solo cello and interactive electronics, specifically for my colleague Elise, who plays with me as part of Versipel New Music. I originally wanted to do that next year, but given the scale of the wind ensemble piece, I’m now thinking that I’ll have to put it off until the fall of 2020 or the spring of 2021. I’ve also been having some initial talks with a dancer/choreographer about maybe doing some collaborative work with her involving interactive video. That has no timeline, but given that I would have to spend time learning how to use Jitter, I imagine it would have to be 2021 or later.

The other thing that I’m thinking about is taking the concepts that I’ve put into Minkowski and turning them into a series of pieces, using similar interactive and creative concepts and some of the same Max work for other instruments, in the same way as Erin Gee’s Mouthpiece series or Berio’s Sequenza series. It would be a lot of fun to write a Minkowski for percussion and another one for clarinet. We’ll see what happens as I let this piece germinate and start to market it. If people want to play it and it’s received well, then it will definitely happen.

Some of the Max programming mechanics that I built for this work have gone into my Kaizen YouTube series, and I’ll be posting at least one more video about them in the near future. For now, below is the most recent one, which covers my custom interactive cue engine.